MindSpore (昇思) 25-Day Learning Camp, Day 22 | Principles of Text Decoding


I like this section because it explains the decoding principles behind most popular NLP models.

This post walks through the principles of text decoding using MindNLP.

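Autoregressive language model: predicts the next word from the words before it, so the probability of a whole text sequence factorizes into the product of each word's conditional probability given its preceding context.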

Decoding methods provided by MindNLP:

Greedy search: at each step, choose the single word with the highest conditional probability.
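To make greedy search concrete, here is a minimal sketch in plain Python. It is not MindNLP's implementation: the table `NEXT_WORD_PROBS` and the function `greedy_search` are hypothetical names, the probabilities are made up, and each word is conditioned only on the previous word (a real model conditions on the whole prefix).

```python
# Toy next-word table: NEXT_WORD_PROBS[w][x] stands for P(x | w).
# All numbers are invented for illustration only.
NEXT_WORD_PROBS = {
    "The": {"nice": 0.5, "dog": 0.4, "car": 0.1},
    "nice": {"woman": 0.4, "house": 0.3, "guy": 0.3},
    "dog": {"has": 0.9, "runs": 0.05, "and": 0.05},
}


def greedy_search(start, steps):
    """Pick the single most probable next word at every step."""
    words, prob = [start], 1.0
    for _ in range(steps):
        candidates = NEXT_WORD_PROBS.get(words[-1])
        if not candidates:
            break  # no known continuation for this word
        best = max(candidates, key=candidates.get)
        prob *= candidates[best]
        words.append(best)
    return words, prob


greedy_words, greedy_prob = greedy_search("The", steps=2)
print(" ".join(greedy_words), greedy_prob)
```

In this toy table greedy search commits to "nice" in the first step and therefore never sees the high-probability continuation "dog has", which is the weakness that beam search addresses.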

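Beam search:

Keep the several most probable hypotheses at each step (num_beams = 2 in the figure below) and, at the end, choose the hypothesis with the highest overall probability.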

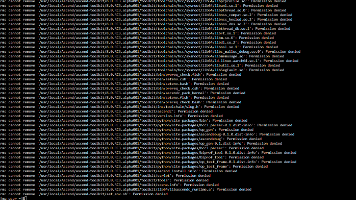
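The idea of keeping several hypotheses alive can be sketched in a few lines of plain Python. This is an illustrative toy, not the algorithm as implemented in MindNLP: `TOY_PROBS` and `beam_search` are hypothetical names, the probabilities are invented, and each word depends only on the previous word.

```python
# Toy next-word table: TOY_PROBS[w][x] stands for P(x | w). Invented numbers.
TOY_PROBS = {
    "The": {"nice": 0.5, "dog": 0.4, "car": 0.1},
    "nice": {"woman": 0.4, "house": 0.3, "guy": 0.3},
    "dog": {"has": 0.9, "runs": 0.05, "and": 0.05},
}


def beam_search(start, steps, num_beams):
    """Keep the num_beams most probable partial sequences at every step."""
    beams = [([start], 1.0)]
    for _ in range(steps):
        expanded = []
        for words, prob in beams:
            for nxt, p in TOY_PROBS.get(words[-1], {}).items():
                expanded.append((words + [nxt], prob * p))
        if not expanded:
            break  # nothing left to extend
        # Rank all extensions by sequence probability and keep the best ones
        expanded.sort(key=lambda item: item[1], reverse=True)
        beams = expanded[:num_beams]
    return beams


beams = beam_search("The", steps=2, num_beams=2)
best_words, best_prob = beams[0]
print(" ".join(best_words), best_prob)
```

With two beams, the hypothesis starting with "dog" survives the first step even though it is not the top choice, so the search ends on "The dog has", whose overall probability is higher than the sequence a purely greedy choice would reach in the same table.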

    from mindnlp.transformers import GPT2Tokenizer, GPT2LMHeadModel

    tokenizer = GPT2Tokenizer.from_pretrained('iiBcai/gpt2', mirror='modelscope')
    # Use the EOS token as the padding token during generation
    model = GPT2LMHeadModel.from_pretrained('iiBcai/gpt2', pad_token_id=tokenizer.eos_token_id, mirror='modelscope')

    # Encode the prompt that generation is conditioned on
    input_ids = tokenizer.encode('I enjoy walking with my cute dog', return_tensors='ms')

    # 1. Plain beam search with 5 beams
    beam_output = model.generate(
        input_ids,
        max_length=50,
        num_beams=5,
        early_stopping=True
    )

    print('Output:\n' + 100 * '-')
    print(tokenizer.decode(beam_output[0], skip_special_tokens=True))
    print(100 * '-')

    # 2. Beam search that forbids repeating any 2-gram
    beam_output = model.generate(
        input_ids,
        max_length=50,
        num_beams=5,
        no_repeat_ngram_size=2,
        early_stopping=True
    )

    print('Beam search with ngram, Output:\n' + 100 * '-')
    print(tokenizer.decode(beam_output[0], skip_special_tokens=True))
    print(100 * '-')

    # 3. Return the 5 best beams instead of only the top one
    beam_outputs = model.generate(
        input_ids,
        max_length=50,
        num_beams=5,
        no_repeat_ngram_size=2,
        num_return_sequences=5,
        early_stopping=True
    )

    print('num_return_sequences, Output:\n' + 100 * '-')
    for i, beam_output in enumerate(beam_outputs):
        print("{}: {}".format(i, tokenizer.decode(beam_output, skip_special_tokens=True)))
    print(100 * '-')

good output:

n-gram: an n-gram model predicts a word from its previous n - 1 words, which makes it a type of Markov model.

no_repeat_ngram_size: sets to 0 the probability of any word that would complete an n-gram of this size already present in the generated text, so no such n-gram appears twice.
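The n-gram blocking rule can be illustrated with a short sketch. It is a simplified stand-in, not MindNLP's code: `banned_next_tokens` is a hypothetical helper, and it works on whole words rather than on the tokenizer's token IDs.

```python
def banned_next_tokens(tokens, ngram_size):
    """Return the words that would repeat an n-gram already present in `tokens`."""
    if ngram_size < 1 or len(tokens) + 1 < ngram_size:
        return set()
    # The last (n - 1) words form the prefix of the n-gram about to be completed
    prefix = tuple(tokens[len(tokens) - (ngram_size - 1):])
    banned = set()
    for i in range(len(tokens) - ngram_size + 1):
        ngram = tuple(tokens[i:i + ngram_size])
        if ngram[:-1] == prefix:
            banned.add(ngram[-1])  # choosing this word would repeat `ngram`
    return banned


history = "I enjoy walking with my dog and I enjoy".split()
banned_bigram = banned_next_tokens(history, 2)
banned_trigram = banned_next_tokens(history, 3)
print(banned_bigram, banned_trigram)
```

Here the text already contains "enjoy walking", so with a size of 2 the word "walking" is banned as the next word; during generation its probability would be set to 0 before the next word is chosen.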

Sampling:

Randomly choose the next word according to the current conditional probability distribution.

Temperature: the logits are divided by the temperature before the softmax. A high temperature flattens the distribution, so the candidate words look more alike and the choice is more random. A low temperature sharpens it, so the most probable words are chosen even more often.
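The effect of temperature can be checked numerically with a small sketch. The helper name `softmax_with_temperature` and the example logits are assumptions for illustration; the only fact relied on is that the logits are divided by the temperature before the softmax.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Softmax over logits / temperature (temperature must be > 0)."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


logits = [2.0, 1.0, 0.1]  # made-up scores for three candidate words
sharp = softmax_with_temperature(logits, 0.7)
plain = softmax_with_temperature(logits, 1.0)
flat = softmax_with_temperature(logits, 2.0)
print(sharp, plain, flat)
```

Comparing the three lists shows the top word gaining probability mass at temperature 0.7 and losing it at temperature 2.0, relative to the unscaled softmax.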

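    import mindspore

    # Fix the random seed so the sampled text is reproducible
    mindspore.set_seed(1234)

    # Sample from the full vocabulary (top_k=0 turns off top-K filtering),
    # with temperature 0.7 to sharpen the distribution
    sample_output = model.generate(
        input_ids,
        do_sample=True,
        max_length=50,
        top_k=0,
        temperature=0.7
    )

    print('Output:\n' + 100 * '-')
    print(tokenizer.decode(sample_output[0], skip_special_tokens=True))

Top-K sampling: keep only the K most probable words, renormalize their probabilities, and sample from that reduced set.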
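A minimal sketch of top-K filtering, assuming a made-up next-word distribution; `top_k_filter` and `sample_word` are hypothetical helpers written for this example, not library functions.

```python
import random


def top_k_filter(probs, k):
    """Keep the k most probable words and renormalize their probabilities."""
    top = sorted(probs.items(), key=lambda kv: kv[1], reverse=True)[:k]
    total = sum(p for _, p in top)
    return {word: p / total for word, p in top}


def sample_word(probs, rng):
    """Draw one word according to the given probabilities."""
    words = list(probs)
    weights = [probs[w] for w in words]
    return rng.choices(words, weights=weights, k=1)[0]


next_word_probs = {"nice": 0.5, "dog": 0.4, "car": 0.1}  # invented numbers
filtered = top_k_filter(next_word_probs, k=2)
chosen = sample_word(filtered, random.Random(1234))
print(filtered, chosen)
```

After filtering with K = 2, "car" can no longer be sampled and the remaining two probabilities are rescaled to sum to 1.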

Top-P sampling:

Keep the smallest set of most probable words whose cumulative probability reaches p, then renormalize their probabilities and sample from that set.
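The cumulative cut-off can be sketched as follows. `top_p_filter` is a hypothetical helper and the distribution is invented; real implementations work on the model's logits, but the selection rule is the same idea.

```python
def top_p_filter(probs, p):
    """Keep the smallest set of top words whose cumulative probability reaches p."""
    ranked = sorted(probs.items(), key=lambda kv: kv[1], reverse=True)
    kept, cumulative = [], 0.0
    for word, prob in ranked:
        kept.append((word, prob))
        cumulative += prob
        if cumulative >= p:
            break  # enough probability mass collected
    total = sum(prob for _, prob in kept)
    return {word: prob / total for word, prob in kept}


next_word_probs = {"nice": 0.5, "dog": 0.3, "car": 0.15, "house": 0.05}  # invented
nucleus_wide = top_p_filter(next_word_probs, p=0.9)
nucleus_narrow = top_p_filter(next_word_probs, p=0.6)
print(nucleus_wide, nucleus_narrow)
```

Unlike top-K, the number of surviving words is not fixed: a peaked distribution keeps few words, while a flat one keeps many.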


Combining top-K and top-P:

    # Sample 3 sequences using both filters: top_k=5 together with top_p=0.95
    sample_outputs = model.generate(
        input_ids,
        do_sample=True,
        max_length=50,
        top_k=5,
        top_p=0.95,
        num_return_sequences=3
    )

    print('Output:\n' + 100 * '-')
    for i, sample_output in enumerate(sample_outputs):
        print("{}: {}".format(i, tokenizer.decode(sample_output, skip_special_tokens=True)))
