Testing a large model on MindIE, and the error "Fatal Python error: PyThreadState_Get: the function must be called with the GIL held"

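Reference address for downloading the model files:

You can collect all the download links in a shell file and run it in the background.

For example:

Contents of g.sh: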

wget https://www.modelscope.cn/models/Qwen/Qwen2.5-72B-Instruct/resolve/master/configuration.json

wget https://www.modelscope.cn/models/Qwen/Qwen2.5-72B-Instruct/resolve/master/vocab.json

wget https://www.modelscope.cn/models/Qwen/Qwen2.5-72B-Instruct/resolve/master/tokenizer.json

wget https://www.modelscope.cn/models/Qwen/Qwen2.5-72B-Instruct/resolve/master/tokenizer_config.json

wget https://www.modelscope.cn/models/Qwen/Qwen2.5-72B-Instruct/resolve/master/README.md

wget https://www.modelscope.cn/models/Qwen/Qwen2.5-72B-Instruct/resolve/master/generation_config.json

wget https://www.modelscope.cn/models/Qwen/Qwen2.5-72B-Instruct/resolve/master/LICENSE

Run `nohup ./g.sh > ./g.log &` to download in the background.
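The g.sh shown above runs each wget once and moves on even if a transfer is cut short. A slightly more defensive variant, as a sketch only: it reads URLs from a text file, resumes partial files with `wget -c`, and prints every URL whose download failed. The list file, the function name `download_all`, and the retry count are illustrative choices, not part of the original script.

```shell
#!/usr/bin/env bash
# Sketch: download every URL listed in a file, resuming partial files.
# Assumed (not from the original g.sh): one URL per line in the list file.

download_all() {
  local list="$1" dest="${2:-.}" url failed=0
  while IFS= read -r url || [ -n "$url" ]; do
    # Skip blank lines and comment lines.
    case "$url" in
      ''|'#'*) continue ;;
    esac
    # -c resumes a partial file, -P sets the target directory,
    # --tries retries transient failures. stdin is detached so the
    # download cannot consume the rest of the URL list.
    if ! wget -c --tries=5 -P "$dest" "$url" < /dev/null; then
      echo "FAILED: $url"
      failed=1
    fi
  done < "$list"
  return "$failed"
}
```

With a final line such as `download_all urls.txt .` added to the script, it can still be launched with nohup in the background, and any `FAILED:` line in the log points at a file to fetch again.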

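After the download finishes, be sure to check g.log.

Run `cat g.log|grep save` to confirm that every file was saved. The problem I ran into was an incomplete file, which caused baffling errors further down the line.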
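Grepping the log shows what was saved, but a gap in a numbered series is easy to miss by eye. A minimal sketch of an automated check, assuming the weight shards follow the Hugging Face naming pattern `model-00001-of-00037.safetensors` (the pattern and the function name `missing_shards` are assumptions; adjust them to the files actually present):

```shell
#!/usr/bin/env bash
# Sketch: print the names of shard files missing from a model directory.
# Assumes shards are named model-<index>-of-<total>.safetensors.

missing_shards() {
  local dir="$1" first total width count i name rc=0
  # Use any one shard name to learn the expected total.
  first=$(ls "$dir" 2>/dev/null \
    | grep -E '^model-[0-9]+-of-[0-9]+\.safetensors$' | head -n 1)
  if [ -z "$first" ]; then
    echo "no shard files found in $dir" >&2
    return 2
  fi
  total=${first##*-of-}          # e.g. 00037.safetensors
  total=${total%.safetensors}    # e.g. 00037
  width=${#total}                # zero-padding width of the index
  count=$((10#$total))           # force base 10 despite leading zeros
  for ((i = 1; i <= count; i++)); do
    printf -v name "model-%0${width}d-of-%s.safetensors" "$i" "$total"
    if [ ! -f "$dir/$name" ]; then
      echo "$name"
      rc=1
    fi
  done
  return "$rc"
}
```

Calling `missing_shards /path/to/model` prints one line per absent shard and returns non-zero if any are missing. It only checks presence, so a truncated file still passes this test.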

MindIE references:

Product version information - Release notes - MindIE 1.0.RC3 development documentation - Ascend Community

MindIE Service Deployment — MindSpore master documentation

Using MindIE from the Huawei image repository:

Problems encountered:

Forum threads on the Huawei ARM NPU environment:

1. https://www.hiascend.com/forum/thread-02105166327838594152-1-1.html

2. https://www.hiascend.com/forum/thread-0290164564141262022-1-1.html

3. https://www.hiascend.com/forum/thread-0296165487498000083-1-1.html

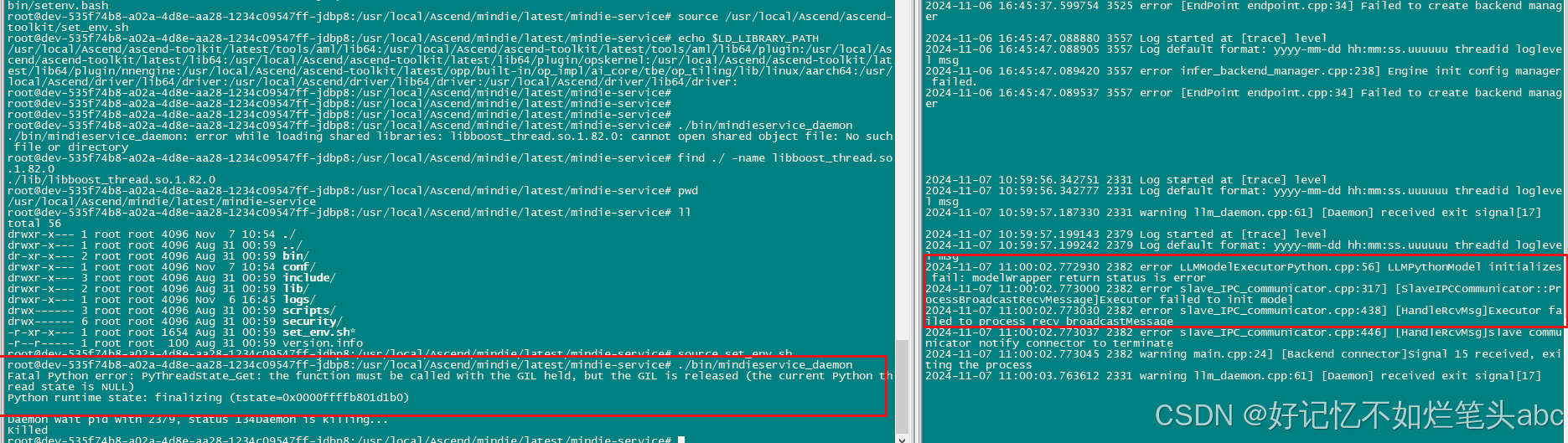

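Errors at run time and how they were handled (resolved: the cause was an incomplete model file download; the fix is described at the end):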
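Much of the session below is spent working out which environment scripts the daemon needs. Condensed into a sketch: source each script that exists, then confirm the variables that the session checks by hand. The script paths and variable names are the ones that appear in the session; the function names are illustrative, and the listing order is not a claim about a required order.

```shell
#!/usr/bin/env bash
# Sketch: load the Ascend/MindIE environment, then report unset variables.
# This file is meant to be sourced so the exports reach the current shell.

# Source each environment script that exists; skip the ones that do not.
source_ascend_env() {
  local script
  for script in \
    /usr/local/Ascend/driver/bin/setenv.bash \
    /usr/local/Ascend/ascend-toolkit/set_env.sh \
    /usr/local/Ascend/nnal/atb/set_env.sh \
    /usr/local/Ascend/mindie/latest/mindie-llm/set_env.sh \
    /usr/local/Ascend/llm_model/set_env.sh \
    /usr/local/Ascend/mindie/latest/mindie-service/set_env.sh; do
    if [ -f "$script" ]; then
      . "$script"
    else
      echo "skip (not found): $script" >&2
    fi
  done
}

# Print the name of every listed variable that is unset or empty.
unset_vars() {
  local name rc=0
  for name in "$@"; do
    if [ -z "${!name:-}" ]; then
      echo "$name"
      rc=1
    fi
  done
  return "$rc"
}
```

After sourcing the file, `source_ascend_env; unset_vars ASCEND_HOME_PATH ATB_HOME_PATH ATB_SPEED_HOME_PATH` prints nothing when all three are set. As the session shows, a complete environment is necessary but was not sufficient here.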

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# export LD_LIBRARY_PATH=/usr/local/Ascend/driver/lib64/driver:$LD_LIBRARY_PATH

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# ./bin/mindieservice_daemon

./bin/mindieservice_daemon: error while loading shared libraries: libsecurec.so: cannot open shared object file: No such file or directory

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# export LD_LIBRARY_PATH=/usr/local/Ascend/driver/lib64/driver:$LD_LIBRARY_PATH

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# ./bin/mindieservice_daemon

./bin/mindieservice_daemon: error while loading shared libraries: libsecurec.so: cannot open shared object file: No such file or directory

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# find / -name libsecurec.so

find: '/sys/kernel/slab/dentry/cgroup/dentry(9886462:user@0.service)': No such file or directory

/usr/local/Ascend/ascend-toolkit/latest/aarch64-linux/lib64/libsecurec.so

/usr/local/Ascend/ascend-toolkit/8.0.RC2/aarch64-linux/lib64/libsecurec.so

/usr/local/Ascend/driver/lib64/common/libsecurec.so

find: '/proc/749/map_files': Permission denied

find: '/proc/750/map_files': Permission denied

find: '/proc/760/map_files': Permission denied

find: '/proc/761/map_files': Permission denied

find: '/proc/1199/map_files': Permission denied

find: '/proc/1227/map_files': Permission denied

find: '/proc/1228/map_files': Permission denied

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# source /usr/local/Ascend/driver/bin/setenv.bash

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# source /usr/local/Ascend/ascend-toolkit/set_env.sh

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# echo $LD_LIBRARY_PATH

/usr/local/Ascend/ascend-toolkit/latest/tools/aml/lib64:/usr/local/Ascend/ascend-toolkit/latest/tools/aml/lib64/plugin:/usr/local/Ascend/ascend-toolkit/latest/lib64:/usr/local/Ascend/ascend-toolkit/latest/lib64/plugin/opskernel:/usr/local/Ascend/ascend-toolkit/latest/lib64/plugin/nnengine:/usr/local/Ascend/ascend-toolkit/latest/opp/built-in/op_impl/ai_core/tbe/op_tiling/lib/linux/aarch64:/usr/local/Ascend/driver/lib64/driver:/usr/local/Ascend/driver/lib64/driver:/usr/local/Ascend/driver/lib64/driver:

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# ./bin/mindieservice_daemon

./bin/mindieservice_daemon: error while loading shared libraries: libboost_thread.so.1.82.0: cannot open shared object file: No such file or directory

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# find ./ -name libboost_thread.so.1.82.0

./lib/libboost_thread.so.1.82.0

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# pwd

/usr/local/Ascend/mindie/latest/mindie-service

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# ll

total 56

drwxr-x--- 1 root root 4096 Nov 7 10:54 ./

drwxr-x--- 1 root root 4096 Aug 31 00:59 ../

dr-xr-x--- 2 root root 4096 Aug 31 00:59 bin/

drwxr-x--- 1 root root 4096 Nov 7 10:54 conf/

drwxr-x--- 3 root root 4096 Aug 31 00:59 include/

drwxr-x--- 2 root root 4096 Aug 31 00:59 lib/

drwxr-x--- 1 root root 4096 Nov 6 16:45 logs/

drwx------ 3 root root 4096 Aug 31 00:59 scripts/

drwx------ 6 root root 4096 Aug 31 00:59 security/

-r-xr-x--- 1 root root 1654 Aug 31 00:59 set_env.sh*

-r--r----- 1 root root 100 Aug 31 00:59 version.info

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# source set_env.sh

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# ./bin/mindieservice_daemon

Fatal Python error: PyThreadState_Get: the function must be called with the GIL held, but the GIL is released (the current Python thread state is NULL)

Python runtime state: finalizing (tstate=0x0000ffffb801d1b0)

Daemon wait pid with 2379, status 134Daemon is killing...

Killed

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# env | grep ASCEND_HOME_PATH

ASCEND_HOME_PATH=/usr/local/Ascend/ascend-toolkit/latest

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# env | grep ATB_SPEED_HOME_PATH

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# env | grep ATB_HOME_PATH

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# ./bin/mindieservice_daemon

Fatal Python error: PyThreadState_Get: the function must be called with the GIL held, but the GIL is released (the current Python thread state is NULL)

Python runtime state: finalizing (tstate=0x0000ffffb001d1b0)

Daemon wait pid with 4336, status 134Daemon is killing...

Killed

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# echo $ATB_SPEED_HOME_PATH

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# source /usr/local/Ascend/ascend-toolkit/set_env.sh

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# echo $ATB_SPEED_HOME_PATH

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# source /usr/local/Ascend/nnal/atb/set_env.sh

root@dev-8242526b-01f2-4a54-b89d-f6d9c57c692d-qjhpf:/usr/local/Ascend/mindie/latest/mindie-service# source /usr/local/Ascend/mindie/latest/mindie-llm/set_env.sh

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# echo $ATB_SPEED_HOME_PATH

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# source /usr/local/Ascend/llm_model/set_env.sh

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# echo $ATB_SPEED_HOME_PATH

/usr/local/Ascend/llm_model

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# env | grep ATB_HOME_PATH

ATB_HOME_PATH=/usr/local/Ascend/nnal/atb/latest/atb/cxx_abi_0

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# env | grep ATB_SPEED_HOME_PATH

ATB_SPEED_HOME_PATH=/usr/local/Ascend/llm_model

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# ./bin/mindieservice_daemon

2024-11-07 11:14:10,906 [INFO] [pid: 5615] env.py-55: {'use_ascend': True, 'max_memory_gb': None, 'reserved_memory_gb': 3, 'skip_warmup': False, 'visible_devices': None, 'use_host_chooser': True, 'bind_cpu': True}

2024-11-07 11:14:12,689 [INFO] [pid: 5615] cpu_binding.py-206: rank_id: 0, device_id: 0, numa_id: 2, shard_devices: [0], cpus: [48, 49, 50, 51, 52, 53, 54, 55, 56, 57, 58, 59, 60, 61, 62, 63, 64, 65, 66, 67, 68, 69, 70, 71]

2024-11-07 11:14:12,691 [INFO] [pid: 5615] cpu_binding.py-231: process 5615, new_affinity is [48, 49, 50, 51, 52, 53, 54, 55, 56, 57, 58, 59, 60, 61, 62, 63, 64, 65, 66, 67, 68, 69, 70, 71], cpu count 24

The argument `trust_remote_code` is to be used with Auto classes. It has no effect here and is ignored.

Special tokens have been added in the vocabulary, make sure the associated word embeddings are fine-tuned or trained.

2024-11-07 11:14:13,560 [INFO] [pid: 5615] logging.py-53: model_runner.quantize: None

, model_runner.kv_quant: None

, model_runner.dytpe: torch.bfloat16

2024-11-07 11:14:13,560 [INFO] [pid: 5615] logging.py-53: Rank table file location:

[W compiler_depend.ts:623] Warning: expandable_segments currently defaults to false. You can enable this feature by `export PYTORCH_NPU_ALLOC_CONF = expandable_segments:True`. (function operator())

2024-11-07 11:14:19,719 [INFO] [pid: 5615] dist.py-94: initialize_distributed has been Set

2024-11-07 11:14:19,722 [INFO] [pid: 5615] logging.py-53: init tokenizer done: Qwen2TokenizerFast(name_or_path='/home/apulis-dev/teamdata/qwen2.5-72B-Instruct', vocab_size=151643, model_max_length=131072, is_fast=True, padding_side='left', truncation_side='right', special_tokens={'eos_token': '<|im_end|>', 'pad_token': '<|endoftext|>', 'additional_special_tokens': ['<|im_start|>', '<|im_end|>', '<|object_ref_start|>', '<|object_ref_end|>', '<|box_start|>', '<|box_end|>', '<|quad_start|>', '<|quad_end|>', '<|vision_start|>', '<|vision_end|>', '<|vision_pad|>', '<|image_pad|>', '<|video_pad|>']}, clean_up_tokenization_spaces=False), added_tokens_decoder={

151643: AddedToken("<|endoftext|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151644: AddedToken("<|im_start|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151645: AddedToken("<|im_end|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151646: AddedToken("<|object_ref_start|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151647: AddedToken("<|object_ref_end|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151648: AddedToken("<|box_start|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151649: AddedToken("<|box_end|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151650: AddedToken("<|quad_start|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151651: AddedToken("<|quad_end|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151652: AddedToken("<|vision_start|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151653: AddedToken("<|vision_end|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151654: AddedToken("<|vision_pad|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151655: AddedToken("<|image_pad|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151656: AddedToken("<|video_pad|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151657: AddedToken("<tool_call>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

151658: AddedToken("</tool_call>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

151659: AddedToken("<|fim_prefix|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

151660: AddedToken("<|fim_middle|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

151661: AddedToken("<|fim_suffix|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

151662: AddedToken("<|fim_pad|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

151663: AddedToken("<|repo_name|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

151664: AddedToken("<|file_sep|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

}

Fatal Python error: PyThreadState_Get: the function must be called with the GIL held, but the GIL is released (the current Python thread state is NULL)

Python runtime state: finalizing (tstate=0x0000ffffa401d6d0)

Daemon wait pid with 5615, status 134Daemon is killing...

Killed

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service#

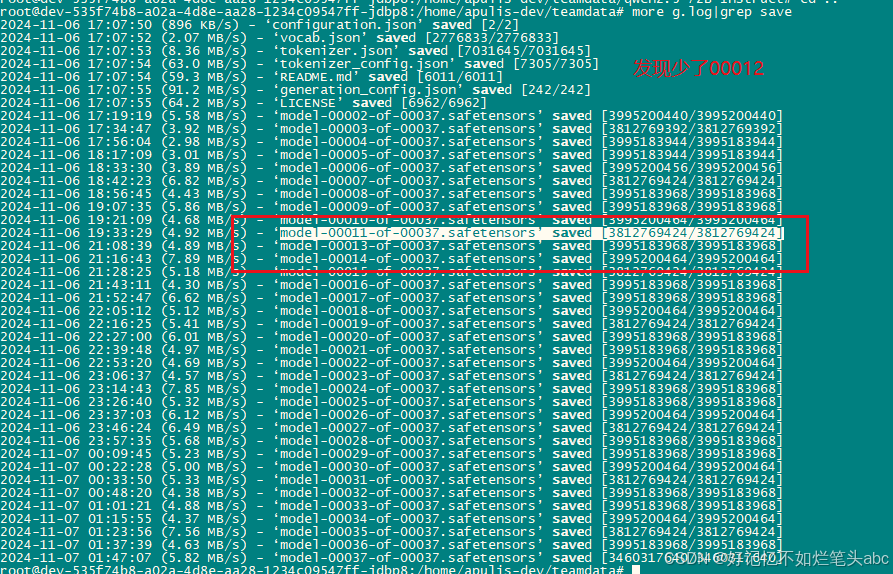

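Found the cause of the failure: the model file download was incomplete.

Going carefully through the download log with `cat g.log|grep save`, I found that the 00012 file was missing from the saved entries, and comparing file sizes showed it was clearly smaller than the other model files.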
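The size comparison can be scripted as well. The sketch below prints every shard whose size falls below a given percentage of the largest shard. The 50 percent default, the file pattern `model-*.safetensors`, and the function name are assumptions. The final shard of a model may be legitimately smaller, so the output is a hint to investigate, not a verdict.

```shell
#!/usr/bin/env bash
# Sketch: list shards that are much smaller than the largest shard.
# Assumes the weight files match model-*.safetensors.

small_shards() {
  local dir="$1" percent="${2:-50}" f size max=0
  # First pass: find the largest shard size in bytes.
  for f in "$dir"/model-*.safetensors; do
    [ -f "$f" ] || continue
    size=$(( $(wc -c < "$f") ))
    if [ "$size" -gt "$max" ]; then
      max=$size
    fi
  done
  # Second pass: report shards below the threshold as "name size".
  for f in "$dir"/model-*.safetensors; do
    [ -f "$f" ] || continue
    size=$(( $(wc -c < "$f") ))
    if [ $(( size * 100 )) -lt $(( max * percent )) ]; then
      printf '%s %s\n' "$(basename "$f")" "$size"
    fi
  done
  return 0
}
```

Any file it reports can be deleted and fetched again, or resumed with `wget -c`, before the daemon is restarted.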

The correct output:

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/llm_model# more /usr/local/Ascend/mindie/latest/mindie-service/conf/config.json

{

"OtherParam" :

{

"ResourceParam" :

{

"cacheBlockSize" : 128

},

"LogParam" :

{

"logLevel" : "Info",

"logPath" : "logs/mindservice.log"

},

"ServeParam" :

{

"ipAddress" : "127.0.0.1",

"managementIpAddress" : "127.0.0.2",

"port" : 1025,

"managementPort" : 1026,

"maxLinkNum" : 1000,

"httpsEnabled" : false,

"tlsCaPath" : "security/ca/",

"tlsCaFile" : ["ca.pem"],

"tlsCert" : "security/certs/server.pem",

"tlsPk" : "security/keys/server.key.pem",

"tlsPkPwd" : "security/pass/mindie_server_key_pwd.txt",

"tlsCrl" : "security/certs/server_crl.pem",

"managementTlsCaFile" : ["management_ca.pem"],

"managementTlsCert" : "security/certs/management_server.pem",

"managementTlsPk" : "security/keys/management_server.key.pem",

"managementTlsPkPwd" : "security/pass/management_mindie_server_key_pwd.txt",

"managementTlsCrl" : "security/certs/management_server_crl.pem",

"kmcKsfMaster" : "tools/pmt/master/ksfa",

"kmcKsfStandby" : "tools/pmt/standby/ksfb",

"multiNodesInferPort" : 1120,

"interNodeTLSEnabled" : true,

"interNodeTlsCaFile" : "security/ca/ca.pem",

"interNodeTlsCert" : "security/certs/server.pem",

"interNodeTlsPk" : "security/keys/server.key.pem",

"interNodeTlsPkPwd" : "security/pass/mindie_server_key_pwd.txt",

"interNodeKmcKsfMaster" : "tools/pmt/master/ksfa",

"interNodeKmcKsfStandby" : "tools/pmt/standby/ksfb"

}

},

"WorkFlowParam" :

{

"TemplateParam" :

{

"templateType" : "Standard",

"templateName" : "Standard_llama"

}

},

"ModelDeployParam" :

{

"engineName" : "mindieservice_llm_engine",

"modelInstanceNumber" : 1,

"tokenizerProcessNumber" : 8,

"maxSeqLen" : 2560,

"npuDeviceIds" : [[0]],

"multiNodesInferEnabled" : false,

"ModelParam" : [

{

"modelInstanceType" : "Standard",

"modelName" : "qwen",

"modelWeightPath" : "/home/apulis-dev/teamdata/qwen2.5-72B-Instruct",

"worldSize" : 1,

"cpuMemSize" : 5,

"npuMemSize" : 8,

"backendType" : "atb",

"pluginParams" : ""

}

]

},

"ScheduleParam" :

{

"maxPrefillBatchSize" : 8,

"maxPrefillTokens" : 8192,

"prefillTimeMsPerReq" : 150,

"prefillPolicyType" : 0,

"decodeTimeMsPerReq" : 50,

"decodePolicyType" : 0,

"maxBatchSize" : 8,

"maxIterTimes" : 512,

"maxPreemptCount" : 0,

"supportSelectBatch" : false,

"maxQueueDelayMicroseconds" : 5000

}

}
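Because the daemon loads its weights from `modelWeightPath`, it is worth confirming that the path in config.json points at the directory that was actually downloaded. A rough sketch using sed: it is a line-oriented extraction that only handles simple `"key" : "value"` string pairs on a single line, as in the file above. It is not a JSON parser, and the function name is illustrative.

```shell
#!/usr/bin/env bash
# Sketch: read one string value from a config file without a JSON parser.
# Only works for "key" : "value" pairs that sit on a single line.

config_string() {
  local key="$1" file="$2"
  sed -n "s/.*\"$key\"[[:space:]]*:[[:space:]]*\"\([^\"]*\)\".*/\1/p" "$file" \
    | head -n 1
}

# Example use (paths as in this article):
#   weights=$(config_string modelWeightPath conf/config.json)
#   [ -d "$weights" ] || echo "weights directory not found: $weights"
```

The key is matched together with its surrounding quotes, so asking for `tlsCert` does not pick up `managementTlsCert`.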

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# ll

total 60

drwxr-x--- 1 root root 4096 Nov 7 12:18 ./

drwxr-x--- 1 root root 4096 Aug 31 00:59 ../

dr-xr-x--- 2 root root 4096 Aug 31 00:59 bin/

drwxr-x--- 1 root root 4096 Nov 7 10:54 conf/

drwxr-x--- 3 root root 4096 Aug 31 00:59 include/

drwxr-x--- 2 root root 4096 Aug 31 00:59 lib/

drwx------ 1 root root 4096 Nov 7 12:18 logs/

drwx------ 3 root root 4096 Aug 31 00:59 scripts/

drwx------ 6 root root 4096 Aug 31 00:59 security/

-r-xr-x--- 1 root root 1654 Aug 31 00:59 set_env.sh*

-r--r----- 1 root root 100 Aug 31 00:59 version.info

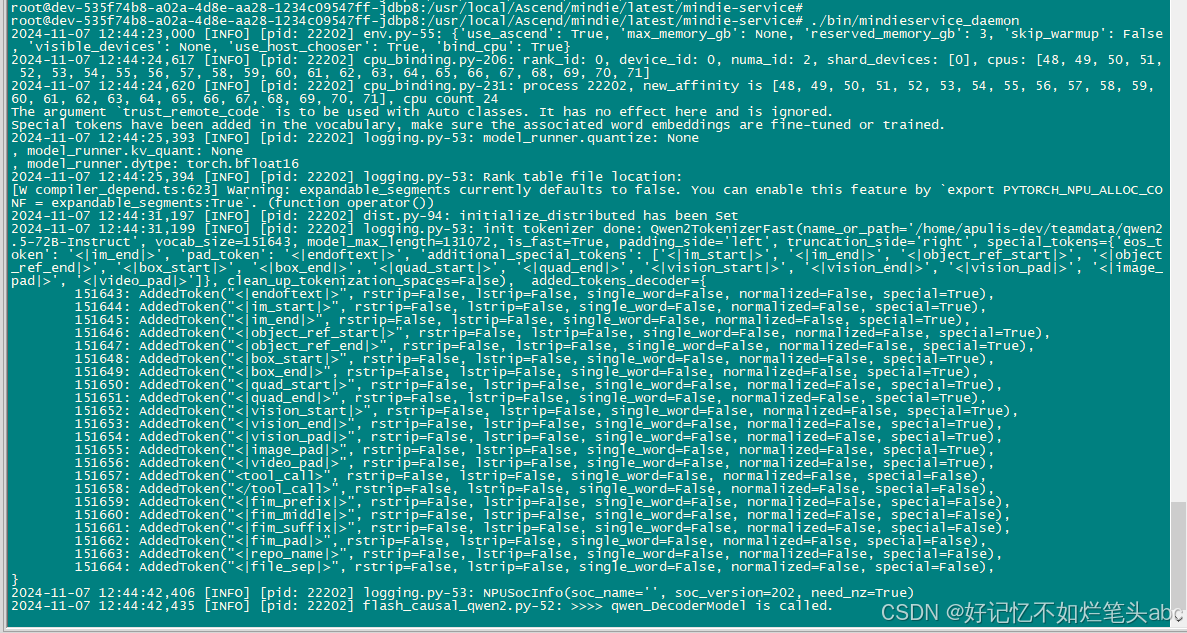

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# ./bin/mindieservice_daemon

2024-11-07 12:44:23,000 [INFO] [pid: 22202] env.py-55: {'use_ascend': True, 'max_memory_gb': None, 'reserved_memory_gb': 3, 'skip_warmup': False, 'visible_devices': None, 'use_host_chooser': True, 'bind_cpu': True}

2024-11-07 12:44:24,617 [INFO] [pid: 22202] cpu_binding.py-206: rank_id: 0, device_id: 0, numa_id: 2, shard_devices: [0], cpus: [48, 49, 50, 51, 52, 53, 54, 55, 56, 57, 58, 59, 60, 61, 62, 63, 64, 65, 66, 67, 68, 69, 70, 71]

2024-11-07 12:44:24,620 [INFO] [pid: 22202] cpu_binding.py-231: process 22202, new_affinity is [48, 49, 50, 51, 52, 53, 54, 55, 56, 57, 58, 59, 60, 61, 62, 63, 64, 65, 66, 67, 68, 69, 70, 71], cpu count 24

The argument `trust_remote_code` is to be used with Auto classes. It has no effect here and is ignored.

Special tokens have been added in the vocabulary, make sure the associated word embeddings are fine-tuned or trained.

2024-11-07 12:44:25,393 [INFO] [pid: 22202] logging.py-53: model_runner.quantize: None

, model_runner.kv_quant: None

, model_runner.dytpe: torch.bfloat16

2024-11-07 12:44:25,394 [INFO] [pid: 22202] logging.py-53: Rank table file location:

[W compiler_depend.ts:623] Warning: expandable_segments currently defaults to false. You can enable this feature by `export PYTORCH_NPU_ALLOC_CONF = expandable_segments:True`. (function operator())

2024-11-07 12:44:31,197 [INFO] [pid: 22202] dist.py-94: initialize_distributed has been Set

2024-11-07 12:44:31,199 [INFO] [pid: 22202] logging.py-53: init tokenizer done: Qwen2TokenizerFast(name_or_path='/home/apulis-dev/teamdata/qwen2.5-72B-Instruct', vocab_size=151643, model_max_length=131072, is_fast=True, padding_side='left', truncation_side='right', special_tokens={'eos_token': '<|im_end|>', 'pad_token': '<|endoftext|>', 'additional_special_tokens': ['<|im_start|>', '<|im_end|>', '<|object_ref_start|>', '<|object_ref_end|>', '<|box_start|>', '<|box_end|>', '<|quad_start|>', '<|quad_end|>', '<|vision_start|>', '<|vision_end|>', '<|vision_pad|>', '<|image_pad|>', '<|video_pad|>']}, clean_up_tokenization_spaces=False), added_tokens_decoder={

151643: AddedToken("<|endoftext|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151644: AddedToken("<|im_start|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151645: AddedToken("<|im_end|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151646: AddedToken("<|object_ref_start|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151647: AddedToken("<|object_ref_end|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151648: AddedToken("<|box_start|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151649: AddedToken("<|box_end|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151650: AddedToken("<|quad_start|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151651: AddedToken("<|quad_end|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151652: AddedToken("<|vision_start|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151653: AddedToken("<|vision_end|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151654: AddedToken("<|vision_pad|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151655: AddedToken("<|image_pad|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151656: AddedToken("<|video_pad|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151657: AddedToken("<tool_call>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

151658: AddedToken("</tool_call>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

151659: AddedToken("<|fim_prefix|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

151660: AddedToken("<|fim_middle|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

151661: AddedToken("<|fim_suffix|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

151662: AddedToken("<|fim_pad|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

151663: AddedToken("<|repo_name|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

151664: AddedToken("<|file_sep|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

}

2024-11-07 12:44:42,406 [INFO] [pid: 22202] logging.py-53: NPUSocInfo(soc_name='', soc_version=202, need_nz=True)

2024-11-07 12:44:42,435 [INFO] [pid: 22202] flash_causal_qwen2.py-52: >>>> qwen_DecoderModel is called.

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest# cd /usr/local/Ascend/llm_model

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/llm_model# ll

total 392

drwxr-xr-x 1 root root 4096 Nov 7 12:14 ./

drwxr-xr-x 1 root root 4096 Aug 31 00:59 ../

-rw-r--r-- 1 root root 20514 Jul 22 15:42 README.md

drwxr-xr-x 1 root root 4096 Jul 22 15:42 atb_llm/

-rw-r--r-- 1 root root 308196 Jul 22 15:42 atb_llm-0.0.1-py3-none-any.whl

drwxr-xr-x 1 root root 4096 Nov 7 11:20 examples/

drwxr-xr-x 2 root root 4096 Jul 22 15:42 lib/

-rw-r--r-- 1 root root 4426 Jul 22 15:42 public_address_statement.md

drwxr-xr-x 3 root root 4096 Jul 22 15:42 requirements/

-rw-r--r-- 1 root root 2156 Jul 22 15:38 set_env.sh

-rw-r--r-- 1 root root 461 Jul 22 15:42 setup.py

drwxr-xr-x 3 root root 4096 Aug 31 00:59 tests/

-rw-r--r-- 1 root root 180 Jul 22 15:42 version.info

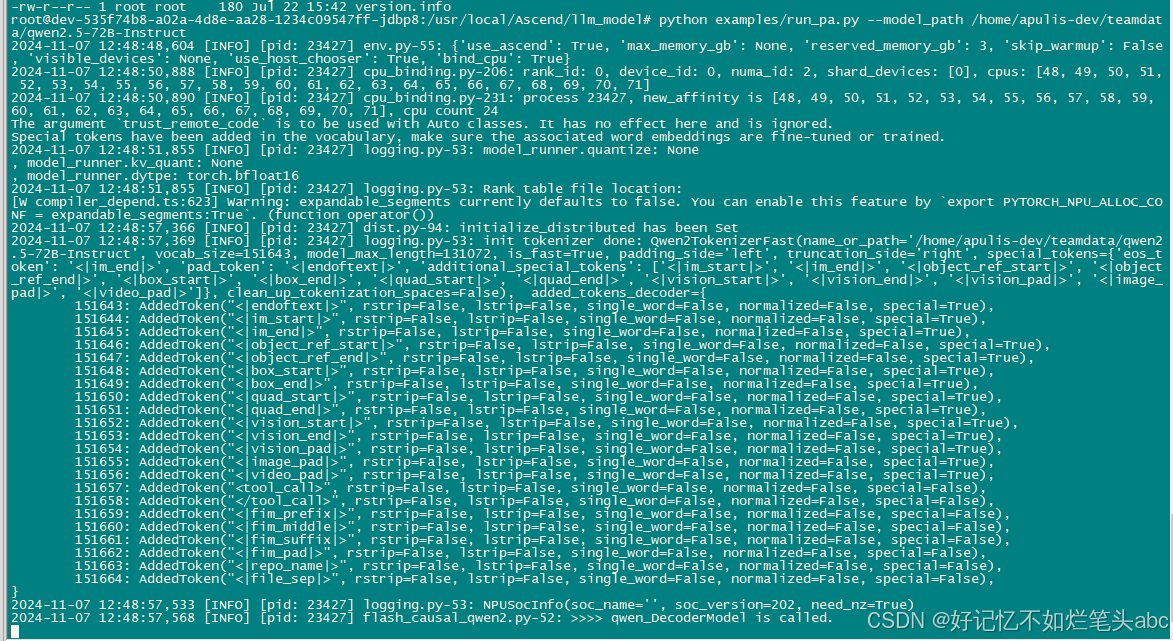

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/llm_model# python examples/run_pa.py --model_path /home/apulis-dev/teamdata/qwen2.5-72B-Instruct

2024-11-07 12:48:48,604 [INFO] [pid: 23427] env.py-55: {'use_ascend': True, 'max_memory_gb': None, 'reserved_memory_gb': 3, 'skip_warmup': False, 'visible_devices': None, 'use_host_chooser': True, 'bind_cpu': True}

2024-11-07 12:48:50,888 [INFO] [pid: 23427] cpu_binding.py-206: rank_id: 0, device_id: 0, numa_id: 2, shard_devices: [0], cpus: [48, 49, 50, 51, 52, 53, 54, 55, 56, 57, 58, 59, 60, 61, 62, 63, 64, 65, 66, 67, 68, 69, 70, 71]

2024-11-07 12:48:50,890 [INFO] [pid: 23427] cpu_binding.py-231: process 23427, new_affinity is [48, 49, 50, 51, 52, 53, 54, 55, 56, 57, 58, 59, 60, 61, 62, 63, 64, 65, 66, 67, 68, 69, 70, 71], cpu count 24

The argument `trust_remote_code` is to be used with Auto classes. It has no effect here and is ignored.

Special tokens have been added in the vocabulary, make sure the associated word embeddings are fine-tuned or trained.

2024-11-07 12:48:51,855 [INFO] [pid: 23427] logging.py-53: model_runner.quantize: None

, model_runner.kv_quant: None

, model_runner.dytpe: torch.bfloat16

2024-11-07 12:48:51,855 [INFO] [pid: 23427] logging.py-53: Rank table file location:

[W compiler_depend.ts:623] Warning: expandable_segments currently defaults to false. You can enable this feature by `export PYTORCH_NPU_ALLOC_CONF = expandable_segments:True`. (function operator())

2024-11-07 12:48:57,366 [INFO] [pid: 23427] dist.py-94: initialize_distributed has been Set

2024-11-07 12:48:57,369 [INFO] [pid: 23427] logging.py-53: init tokenizer done: Qwen2TokenizerFast(name_or_path='/home/apulis-dev/teamdata/qwen2.5-72B-Instruct', vocab_size=151643, model_max_length=131072, is_fast=True, padding_side='left', truncation_side='right', special_tokens={'eos_token': '<|im_end|>', 'pad_token': '<|endoftext|>', 'additional_special_tokens': ['<|im_start|>', '<|im_end|>', '<|object_ref_start|>', '<|object_ref_end|>', '<|box_start|>', '<|box_end|>', '<|quad_start|>', '<|quad_end|>', '<|vision_start|>', '<|vision_end|>', '<|vision_pad|>', '<|image_pad|>', '<|video_pad|>']}, clean_up_tokenization_spaces=False), added_tokens_decoder={

151643: AddedToken("<|endoftext|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151644: AddedToken("<|im_start|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151645: AddedToken("<|im_end|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151646: AddedToken("<|object_ref_start|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151647: AddedToken("<|object_ref_end|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151648: AddedToken("<|box_start|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151649: AddedToken("<|box_end|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151650: AddedToken("<|quad_start|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151651: AddedToken("<|quad_end|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151652: AddedToken("<|vision_start|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151653: AddedToken("<|vision_end|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151654: AddedToken("<|vision_pad|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151655: AddedToken("<|image_pad|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151656: AddedToken("<|video_pad|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151657: AddedToken("<tool_call>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

151658: AddedToken("</tool_call>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

151659: AddedToken("<|fim_prefix|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

151660: AddedToken("<|fim_middle|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

151661: AddedToken("<|fim_suffix|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

151662: AddedToken("<|fim_pad|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

151663: AddedToken("<|repo_name|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

151664: AddedToken("<|file_sep|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

}

2024-11-07 12:48:57,533 [INFO] [pid: 23427] logging.py-53: NPUSocInfo(soc_name='', soc_version=202, need_nz=True)

2024-11-07 12:48:57,568 [INFO] [pid: 23427] flash_causal_qwen2.py-52: >>>> qwen_DecoderModel is called.

Starting in the background:
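When the daemon runs in the background there is no foreground output to watch, so a small polling loop can tell when something starts listening. This sketch uses bash's `/dev/tcp` redirection; the address 127.0.0.1 and port 1025 in the usage comment come from `ipAddress` and `port` in the config.json shown earlier. Treating an open port as "service ready" is an assumption, the timeout default is arbitrary, and `/dev/tcp` requires a bash built with network redirections.

```shell
#!/usr/bin/env bash
# Sketch: wait until a TCP port accepts connections, or give up.
# Assumption: an open port is taken to mean the service has started.

wait_for_port() {
  local host="$1" port="$2" timeout="${3:-600}" waited=0
  while [ "$waited" -lt "$timeout" ]; do
    # The subshell opens a TCP connection and closes it again on exit.
    if (exec 3<>"/dev/tcp/$host/$port") 2>/dev/null; then
      return 0
    fi
    sleep 1
    waited=$((waited + 1))
  done
  return 1
}

# Example use after launching the daemon with nohup:
#   wait_for_port 127.0.0.1 1025 600 && echo "port is open"
```

If the function times out, the daemon's log file is the place to look next.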

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest# cd mindie-service/

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# ll

total 64

drwxr-x--- 1 root root 4096 Nov 7 12:44 ./

drwxr-x--- 1 root root 4096 Aug 31 00:59 ../

dr-xr-x--- 2 root root 4096 Aug 31 00:59 bin/

drwxr-x--- 1 root root 4096 Nov 7 10:54 conf/

drwxr-x--- 3 root root 4096 Aug 31 00:59 include/

drwxr-x--- 3 root root 4096 Nov 7 12:44 kernel_meta/

drwxr-x--- 2 root root 4096 Aug 31 00:59 lib/

drwx------ 1 root root 4096 Nov 7 12:44 logs/

drwx------ 3 root root 4096 Aug 31 00:59 scripts/

drwx------ 6 root root 4096 Aug 31 00:59 security/

-r-xr-x--- 1 root root 1654 Aug 31 00:59 set_env.sh*

-r--r----- 1 root root 100 Aug 31 00:59 version.info

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# nohup ./bin/mindieservice_daemon > output.log 2>&1 &

[2] 25894

root@dev-535f74b8-a02a-4d8e-aa28-1234c09547ff-jdbp8:/usr/local/Ascend/mindie/latest/mindie-service# tail -f output.log

nohup: ignoring input

2024-11-07 13:01:03,735 [INFO] [pid: 25918] env.py-55: {'use_ascend': True, 'max_memory_gb': None, 'reserved_memory_gb': 3, 'skip_warmup': False, 'visible_devices': None, 'use_host_chooser': True, 'bind_cpu': True}

2024-11-07 13:01:05,385 [INFO] [pid: 25918] cpu_binding.py-206: rank_id: 0, device_id: 0, numa_id: 2, shard_devices: [0], cpus: [48, 49, 50, 51, 52, 53, 54, 55, 56, 57, 58, 59, 60, 61, 62, 63, 64, 65, 66, 67, 68, 69, 70, 71]

2024-11-07 13:01:05,387 [INFO] [pid: 25918] cpu_binding.py-231: process 25918, new_affinity is [48, 49, 50, 51, 52, 53, 54, 55, 56, 57, 58, 59, 60, 61, 62, 63, 64, 65, 66, 67, 68, 69, 70, 71], cpu count 24

The argument `trust_remote_code` is to be used with Auto classes. It has no effect here and is ignored.

Special tokens have been added in the vocabulary, make sure the associated word embeddings are fine-tuned or trained.

2024-11-07 13:01:06,122 [INFO] [pid: 25918] logging.py-53: model_runner.quantize: None

, model_runner.kv_quant: None

, model_runner.dytpe: torch.bfloat16

2024-11-07 13:01:06,122 [INFO] [pid: 25918] logging.py-53: Rank table file location:

[W compiler_depend.ts:623] Warning: expandable_segments currently defaults to false. You can enable this feature by `export PYTORCH_NPU_ALLOC_CONF = expandable_segments:True`. (function operator())

2024-11-07 13:01:11,742 [INFO] [pid: 25918] dist.py-94: initialize_distributed has been Set

2024-11-07 13:01:11,744 [INFO] [pid: 25918] logging.py-53: init tokenizer done: Qwen2TokenizerFast(name_or_path='/home/apulis-dev/teamdata/qwen2.5-72B-Instruct', vocab_size=151643, model_max_length=131072, is_fast=True, padding_side='left', truncation_side='right', special_tokens={'eos_token': '<|im_end|>', 'pad_token': '<|endoftext|>', 'additional_special_tokens': ['<|im_start|>', '<|im_end|>', '<|object_ref_start|>', '<|object_ref_end|>', '<|box_start|>', '<|box_end|>', '<|quad_start|>', '<|quad_end|>', '<|vision_start|>', '<|vision_end|>', '<|vision_pad|>', '<|image_pad|>', '<|video_pad|>']}, clean_up_tokenization_spaces=False), added_tokens_decoder={

151643: AddedToken("<|endoftext|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151644: AddedToken("<|im_start|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151645: AddedToken("<|im_end|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151646: AddedToken("<|object_ref_start|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151647: AddedToken("<|object_ref_end|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151648: AddedToken("<|box_start|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151649: AddedToken("<|box_end|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151650: AddedToken("<|quad_start|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151651: AddedToken("<|quad_end|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151652: AddedToken("<|vision_start|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151653: AddedToken("<|vision_end|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151654: AddedToken("<|vision_pad|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151655: AddedToken("<|image_pad|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151656: AddedToken("<|video_pad|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=True),

151657: AddedToken("<tool_call>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

151658: AddedToken("</tool_call>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

151659: AddedToken("<|fim_prefix|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

151660: AddedToken("<|fim_middle|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

151661: AddedToken("<|fim_suffix|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

151662: AddedToken("<|fim_pad|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

151663: AddedToken("<|repo_name|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

151664: AddedToken("<|file_sep|>", rstrip=False, lstrip=False, single_word=False, normalized=False, special=False),

}

2024-11-07 13:01:11,942 [INFO] [pid: 25918] logging.py-53: NPUSocInfo(soc_name='', soc_version=202, need_nz=True)

2024-11-07 13:01:11,967 [INFO] [pid: 25918] flash_causal_qwen2.py-52: >>>> qwen_DecoderModel is called.

Errors found when checking the logs:

Insufficient device memory on a single card:

RuntimeError: NPU out of memory. Tried to allocate 464.00 MiB (NPU 0; 21.02 GiB total capacity; 18.90 GiB already allocated; 18.90 GiB current active; 887.61 MiB free; 18.91 GiB reserved in total by PyTorch) If reserved memory is >> allocated memory try setting max_split_size_mb to avoid fragmentation.

2024-11-07 14:53:55,195 [ERROR] model.py:33 - [Model] >>> return initialize error result: {'status': 'error', 'npuBlockNum': '0', 'cpuBlockNum': '0'}
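To put the numbers in the OOM message in context, the following is a minimal sketch that parses the message and gives a rough lower bound on how many cards the weights alone would need. The parameter count (about 72 billion, taken from the model name) and the helper names `parse_oom`, `weight_footprint_gib` and `min_cards_for_weights` are assumptions made for illustration; they are not part of MindIE. The 2 bytes per parameter follows from the `torch.bfloat16` dtype shown in the log.

```python
import math
import re

# The OOM line from the log above, joined back into one string.
OOM_LINE = (
    "RuntimeError: NPU out of memory. Tried to allocate 464.00 MiB "
    "(NPU 0; 21.02 GiB total capacity; 18.90 GiB already allocated; "
    "18.90 GiB current active; 887.61 MiB free; "
    "18.91 GiB reserved in total by PyTorch)"
)

UNIT_TO_GIB = {"MiB": 1 / 1024, "GiB": 1.0}


def parse_oom(line: str) -> dict:
    """Extract selected memory figures from an OOM message, converted to GiB."""
    figures = {}
    pattern = re.compile(
        r"(\d+(?:\.\d+)?) (MiB|GiB) (total capacity|already allocated|free)"
    )
    for value, unit, label in pattern.findall(line):
        figures[label] = float(value) * UNIT_TO_GIB[unit]
    requested = re.search(r"Tried to allocate (\d+(?:\.\d+)?) (MiB|GiB)", line)
    if requested:
        figures["requested"] = float(requested.group(1)) * UNIT_TO_GIB[requested.group(2)]
    return figures


def weight_footprint_gib(num_params: float, bytes_per_param: int = 2) -> float:
    """Size of the weights only (no KV cache, no activations), in GiB."""
    return num_params * bytes_per_param / 2**30


def min_cards_for_weights(num_params: float, capacity_gib: float,
                          bytes_per_param: int = 2) -> int:
    """Lower bound on the card count if the weights were split evenly."""
    return math.ceil(weight_footprint_gib(num_params, bytes_per_param) / capacity_gib)


figures = parse_oom(OOM_LINE)
ASSUMED_PARAMS = 72e9  # assumption: approximate parameter count of a "72B" model

print(figures)
print(f"weights: {weight_footprint_gib(ASSUMED_PARAMS):.1f} GiB")
print("cards needed for weights alone:",
      min_cards_for_weights(ASSUMED_PARAMS, figures["total capacity"]))
```

Under these assumptions the bf16 weights come to roughly 134 GiB, far more than the 21.02 GiB a single card reports, so the model has to be sharded across several cards. The estimate covers weights only; the KV cache, activations and the reserved memory shown in the log need additional room.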

root@dev-8242526b-01f2-4a54-b89d-f6d9c57c692d-qjhpf:/usr/local/Ascend/mindie/latest/mindie-service/logs# ll

total 96

drwx------ 1 root root 4096 Nov 7 15:36 ./

drwxr-x--- 1 root root 4096 Nov 7 15:36 ../

-rw-r----- 1 root root 16278 Nov 7 15:36 mindie_audit.log

-rw-r----- 1 root root 16620 Nov 7 15:36 mindservice.log

-rw-r--r-- 1 root root 2079 Nov 7 12:18 pythonlog.log.17538

-rw-r--r-- 1 root root 1763 Nov 7 13:41 pythonlog.log.1761

-rw-r--r-- 1 root root 0 Nov 7 12:44 pythonlog.log.22202

-rw-r--r-- 1 root root 1763 Nov 7 13:43 pythonlog.log.2366

-rw-r--r-- 1 root root 1763 Nov 7 11:00 pythonlog.log.2379

-rw-r--r-- 1 root root 0 Nov 7 13:00 pythonlog.log.25918

-rw-r--r-- 1 root root 0 Nov 7 13:06 pythonlog.log.27337

-rw-r--r-- 1 root root 0 Nov 7 13:17 pythonlog.log.30201

-rw-r--r-- 1 root root 1763 Nov 7 13:47 pythonlog.log.3124

-rw-r--r-- 1 root root 3313 Nov 7 14:53 pythonlog.log.33308

-rw-r--r-- 1 root root 1763 Nov 7 11:11 pythonlog.log.4336

-rw-r--r-- 1 root root 3313 Nov 7 15:17 pythonlog.log.45779

-rw-r--r-- 1 root root 2079 Nov 7 11:14 pythonlog.log.5615

-rw-r--r-- 1 root root 2351 Nov 7 15:32 pythonlog.log.65298

-rw-r--r-- 1 root root 150 Nov 7 14:06 pythonlog.log.6647

-rw-r--r-- 1 root root 0 Nov 7 15:36 pythonlog.log.69882
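Many of the `pythonlog.log.<pid>` files in the listing above are empty, so it can help to scan only the non-empty ones for error lines. The sketch below is one hypothetical way to do that; the function name `scan_python_logs` and the keyword list are assumptions, and the directory is the one shown in the shell prompt.

```python
from pathlib import Path


def scan_python_logs(log_dir, keywords=("out of memory", "error", "traceback")):
    """Return {file name: [matching lines]} for non-empty pythonlog.log.* files."""
    hits = {}
    root = Path(log_dir)
    if not root.is_dir():
        return hits
    for path in sorted(root.glob("pythonlog.log.*")):
        if path.stat().st_size == 0:
            continue  # skip the empty logs left by runs that wrote nothing
        lines = path.read_text(errors="replace").splitlines()
        matched = [
            line for line in lines
            if any(key in line.lower() for key in keywords)
        ]
        if matched:
            hits[path.name] = matched
    return hits


if __name__ == "__main__":
    # Directory taken from the shell prompt in the listing above.
    LOG_DIR = "/usr/local/Ascend/mindie/latest/mindie-service/logs"
    for name, lines in scan_python_logs(LOG_DIR).items():
        print(f"== {name}")
        for line in lines:
            print("   ", line)
```

Matching is case-insensitive and deliberately broad, so some hits may be harmless; the point is only to narrow down which per-process log to read in full.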
