昇思25天学习打卡营第7天|模型训练、模型保存与加载

接口直接将模型保存为MindIR,同时保存了Checkpoint和模型结构,因此需要定义输入Tensor来获取输入shape。同样,保存与加载在第一章中也介绍过,熟悉下新的写法。

·

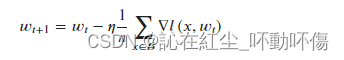

本章主要介绍模型的训练、保存和加载,在第一章中已经学习过,主要是熟悉新的写法,没有其他特殊的处理,核心内容是实现随机梯度下降算法:

模型训练:

模型训练一般分为四个步骤:

- 构建数据集。

- 定义神经网络模型。

- 定义超参、损失函数及优化器。

- 输入数据集进行训练与评估。

构建数据集:

import mindspore

from mindspore import nn

from mindspore.dataset import vision, transforms

from mindspore.dataset import MnistDataset

# Download data from open datasets

from download import download

url = "https://mindspore-website.obs.cn-north-4.myhuaweicloud.com/" \

"notebook/datasets/MNIST_Data.zip"

path = download(url, "./", kind="zip", replace=True)

def datapipe(path, batch_size):

image_transforms = [

vision.Rescale(1.0 / 255.0, 0),

vision.Normalize(mean=(0.1307,), std=(0.3081,)),

vision.HWC2CHW()

]

label_transform = transforms.TypeCast(mindspore.int32)

dataset = MnistDataset(path)

dataset = dataset.map(image_transforms, 'image')

dataset = dataset.map(label_transform, 'label')

dataset = dataset.batch(batch_size)

return dataset

train_dataset = datapipe('MNIST_Data/train', batch_size=64)

test_dataset = datapipe('MNIST_Data/test', batch_size=64)

定义神经网络模型:

class Network(nn.Cell):

def __init__(self):

super().__init__()

self.flatten = nn.Flatten()

self.dense_relu_sequential = nn.SequentialCell(

nn.Dense(28*28, 512),

nn.ReLU(),

nn.Dense(512, 512),

nn.ReLU(),

nn.Dense(512, 10)

)

def construct(self, x):

x = self.flatten(x)

logits = self.dense_relu_sequential(x)

return logits

model = Network()定义超参、损失函数和优化器:

## 超参

epochs = 3 # 训练轮次

batch_size = 64 # 批次大小

learning_rate = 1e-2 # 学习率

## 损失函数

loss_fn = nn.CrossEntropyLoss()

## 优化器

optimizer = nn.SGD(model.trainable_params(), learning_rate=learning_rate)训练与评估:

## 定义前向传播

def forward_fn(data, label):

logits = model(data)

loss = loss_fn(logits, label)

return loss, logits

## 自微分函数

grad_fn = mindspore.value_and_grad(forward_fn, None, optimizer.parameters, has_aux=True)

## 定义一步训练

def train_step(data, label):

(loss, _), grads = grad_fn(data, label)

optimizer(grads)

return loss

## 训练轮训

def train_loop(model, dataset):

size = dataset.get_dataset_size()

model.set_train()

for batch, (data, label) in enumerate(dataset.create_tuple_iterator()):

loss = train_step(data, label)

if batch % 100 == 0:

loss, current = loss.asnumpy(), batch

print(f"loss: {loss:>7f} [{current:>3d}/{size:>3d}]")

## 测试轮训

def test_loop(model, dataset, loss_fn):

num_batches = dataset.get_dataset_size()

model.set_train(False)

total, test_loss, correct = 0, 0, 0

for data, label in dataset.create_tuple_iterator():

pred = model(data)

total += len(data)

test_loss += loss_fn(pred, label).asnumpy()

correct += (pred.argmax(1) == label).asnumpy().sum()

test_loss /= num_batches

correct /= total

print(f"Test: \n Accuracy: {(100*correct):>0.1f}%, Avg loss: {test_loss:>8f} \n")

loss_fn = nn.CrossEntropyLoss()

optimizer = nn.SGD(model.trainable_params(), learning_rate=learning_rate)

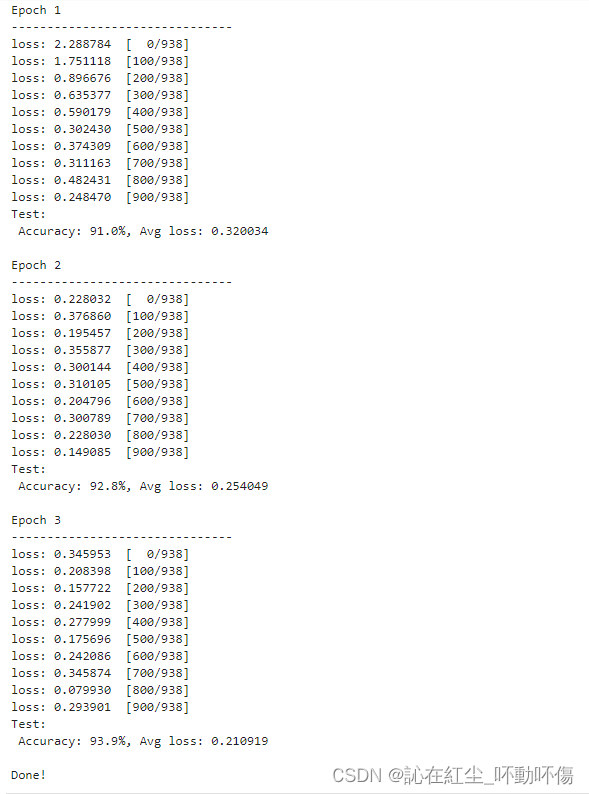

for t in range(epochs):

print(f"Epoch {t+1}\n-------------------------------")

train_loop(model, train_dataset)

test_loop(model, test_dataset, loss_fn)

print("Done!")

保存与加载:

同样,保存与加载在第一章中也介绍过,熟悉下新的写法。

import numpy as np

import mindspore

from mindspore import nn

from mindspore import Tensor

def network():

model = nn.SequentialCell(

nn.Flatten(),

nn.Dense(28*28, 512),

nn.ReLU(),

nn.Dense(512, 512),

nn.ReLU(),

nn.Dense(512, 10))

return model

## 保存模型

model = network()

mindspore.save_checkpoint(model, "model.ckpt")

## 实例化和加载模型

model = network()

param_dict = mindspore.load_checkpoint("model.ckpt")

param_not_load, _ = mindspore.load_param_into_net(model, param_dict)

print(param_not_load)![]()

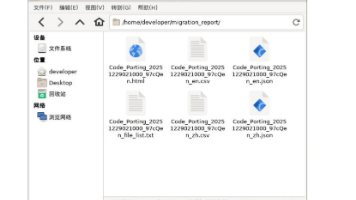

保存和加载MindIR:

可使用export接口直接将模型保存为MindIR,同时保存了Checkpoint和模型结构,因此需要定义输入Tensor来获取输入shape。

model = network()

inputs = Tensor(np.ones([1, 1, 28, 28]).astype(np.float32))

mindspore.export(model, inputs, file_name="model", file_format="MINDIR")

mindspore.set_context(mode=mindspore.GRAPH_MODE)

graph = mindspore.load("model.mindir")

model = nn.GraphCell(graph) # 仅支持图模式

outputs = model(inputs)

print(outputs.shape)

鲲鹏昇腾开发者社区是面向全社会开放的“联接全球计算开发者,聚合华为+生态”的社区,内容涵盖鲲鹏、昇腾资源,帮助开发者快速获取所需的知识、经验、软件、工具、算力,支撑开发者易学、好用、成功,成为核心开发者。

更多推荐

已为社区贡献17条内容

已为社区贡献17条内容

所有评论(0)