MindSpore (昇思) 25-Day Learning Check-in Camp, Day 14 | CycleGAN


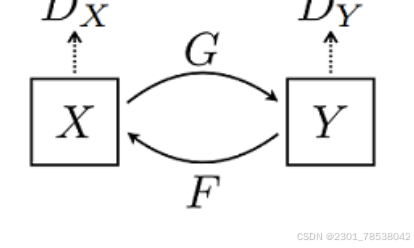

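CycleGAN performs style transfer: it learns how to translate an image from one style into another.

Let domain X have one style and domain Y another. To get a picture of a Y object in X's style, we only need to learn a generator that maps Y-style images to X-style images. CycleGAN trains one generator for each direction (X → Y and Y → X).

The key loss is the cycle-consistency loss. Take an image x from X and use the X → Y generator to produce x' in Y's style, then feed x' to the Y → X generator to produce x'' in X's style again. If both generators work well, x'' should reconstruct the original x, so the difference between x and x'' is penalized as the cycle-consistency loss. The same cycle is also run in the other direction, starting from an image in Y.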
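In the CycleGAN paper the cycle-consistency loss is an L1 penalty in both directions, $\lambda\,(\lVert F(G(x)) - x\rVert_1 + \lVert G(F(y)) - y\rVert_1)$, where G: X → Y and F: Y → X are the two generators and λ is a weight (10 in the paper). Below is a minimal NumPy sketch of that formula, not the MindSpore training code: the toy linear "generators" `g`, `f_exact` and `f_biased` are hypothetical stand-ins for the real networks, chosen so the result can be checked by hand, and each L1 term is averaged over elements.

```python
import numpy as np

def l1_loss(a, b):
    """Mean absolute difference between two arrays (an L1 reconstruction error)."""
    return float(np.mean(np.abs(a - b)))

def cycle_consistency_loss(x, y, g, f, lam=10.0):
    """lam * (|F(G(x)) - x|_1 + |G(F(y)) - y|_1), each term averaged over elements."""
    x_rec = f(g(x))  # X -> Y -> X
    y_rec = g(f(y))  # Y -> X -> Y
    return lam * (l1_loss(x_rec, x) + l1_loss(y_rec, y))

# Hypothetical toy "generators" acting on pixel arrays in [-1, 1]
g = lambda t: 2.0 * t + 1.0                 # X -> Y
f_exact = lambda t: (t - 1.0) / 2.0         # exact inverse of g
f_biased = lambda t: (t - 1.0) / 2.0 + 0.1  # inverse plus a constant 0.1 error

rng = np.random.default_rng(0)
x = rng.uniform(-1.0, 1.0, size=(3, 8, 8))
y = rng.uniform(-1.0, 1.0, size=(3, 8, 8))

print(cycle_consistency_loss(x, y, g, f_exact))   # ~0.0: both cycles reconstruct their input
print(cycle_consistency_loss(x, y, g, f_biased))  # ~3.0 = 10 * (0.1 + 0.2)
```

With `f_biased`, the X cycle returns x + 0.1 and the Y cycle returns y + 0.2, so the loss grows with how far each round trip drifts from its input, exactly what pushes the two generators to be mutually consistent.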

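Here is the discriminator. It is a PatchGAN-style network: rather than a single real/fake score per image, it outputs a one-channel map in which each value scores one patch of the input.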

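```python
import mindspore.nn as nn
from mindspore.common.initializer import Normal

# Assumed: N(0, 0.02) init, the usual GAN choice; the original tutorial defines weight_init earlier.
weight_init = Normal(sigma=0.02)
# ConvNormReLU (conv + norm + activation) is also defined earlier in the tutorial and not shown here.

class Discriminator(nn.Cell):
    """PatchGAN discriminator: returns a 1-channel map of real/fake scores, one per input patch."""
    def __init__(self, input_channel=3, output_channel=64, n_layers=3, alpha=0.2, norm_mode='instance'):
        super(Discriminator, self).__init__()
        kernel_size = 4
        # First layer: stride-2 conv + LeakyReLU, no normalization
        layers = [nn.Conv2d(input_channel, output_channel, kernel_size, 2, pad_mode='pad', padding=1,
                            weight_init=weight_init),
                  nn.LeakyReLU(alpha)]
        nf_mult = output_channel
        # Stride-2 downsampling layers; channels double each time, capped at 8 * output_channel
        for i in range(1, n_layers):
            nf_mult_prev = nf_mult
            nf_mult = min(2 ** i, 8) * output_channel
            layers.append(ConvNormReLU(nf_mult_prev, nf_mult, kernel_size, 2, alpha, norm_mode, padding=1))
        # One stride-1 layer, then a 1-channel conv that produces the patch score map
        nf_mult_prev = nf_mult
        nf_mult = min(2 ** n_layers, 8) * output_channel
        layers.append(ConvNormReLU(nf_mult_prev, nf_mult, kernel_size, 1, alpha, norm_mode, padding=1))
        layers.append(nn.Conv2d(nf_mult, 1, kernel_size, 1, pad_mode='pad', padding=1, weight_init=weight_init))
        self.features = nn.SequentialCell(layers)

    def construct(self, x):
        output = self.features(x)
        return output

# One discriminator per domain; the prefixes keep their parameter names distinct.
net_d_a = Discriminator()
net_d_a.update_parameters_name('net_d_a.')
net_d_b = Discriminator()
net_d_b.update_parameters_name('net_d_b.')
```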
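Because the discriminator is fully convolutional, its output size follows from the standard convolution formula out = ⌊(in + 2·padding − kernel) / stride⌋ + 1. The plain-Python sketch below (not MindSpore code) mirrors the layer list built in `Discriminator.__init__` for the default settings (`n_layers=3`, kernel 4, padding 1) and a 256×256 input. It reports the channel progression, the size of the output score map, and the receptive field of each output value. The helper names `conv_out` and `discriminator_plan` are illustrative only.

```python
def conv_out(size, kernel, stride, padding):
    """Spatial size after a Conv2d with explicit padding (pad_mode='pad')."""
    return (size + 2 * padding - kernel) // stride + 1

def discriminator_plan(input_size=256, output_channel=64, n_layers=3, kernel=4):
    """Mirror Discriminator's layers as (out_channels, stride) pairs and trace sizes."""
    plan = [(output_channel, 2)]                                 # first conv + LeakyReLU
    for i in range(1, n_layers):
        plan.append((min(2 ** i, 8) * output_channel, 2))        # stride-2 ConvNormReLU
    plan.append((min(2 ** n_layers, 8) * output_channel, 1))     # stride-1 ConvNormReLU
    plan.append((1, 1))                                          # final 1-channel conv
    channels = [c for c, _ in plan]

    size = input_size
    for _, stride in plan:
        size = conv_out(size, kernel, stride, padding=1)

    # Receptive field, accumulated from the output layer back to the input
    receptive_field = 1
    for _, stride in reversed(plan):
        receptive_field = (receptive_field - 1) * stride + kernel
    return channels, size, receptive_field

print(discriminator_plan())  # ([64, 128, 256, 512, 1], 30, 70)
```

So for a 256×256 image the discriminator returns a 1×30×30 score map, and each score looks at a 70×70 region of the input, which is why this design is often called a 70×70 PatchGAN.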

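To better understand the generator, let's first sketch its architecture (input: a 3×256×256 image):

1. CNR (Conv-Norm-ReLU): 64 channels, 7×7 kernel, stride 1
2. CNR: 128 channels, 3×3 kernel, stride 2 (downsampling)
3. CNR: 256 channels, 3×3 kernel, stride 2 (downsampling)
4. ResidualBlock × 9: 256 channels, 3×3 kernels
5. CTNR (ConvTranspose-Norm-ReLU): 128 channels, 3×3 kernel, stride 2 (upsampling)
6. CTNR: 64 channels, 3×3 kernel, stride 2 (upsampling)
7. Conv: 3 channels, 7×7 kernel, followed by tanh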
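To see how the feature-map shapes evolve through these stages, here is a plain-Python shape trace (not MindSpore code). It assumes, as labeled in the comments, that the regular convolutions use padding (kernel − 1) // 2 and that each stride-2 transposed convolution uses 'same' padding and therefore exactly doubles the spatial size. `trace_generator` is a hypothetical helper for illustration.

```python
def conv_out(size, kernel, stride, padding):
    """Spatial size after a convolution with explicit zero padding."""
    return (size + 2 * padding - kernel) // stride + 1

def trace_generator(size=256, base=64, n_res=9):
    """List (stage, channels, spatial size) through the generator for a size x size input."""
    trace = [("input", 3, size)]
    # assumed: padding = (kernel - 1) // 2 for the regular convolutions
    size = conv_out(size, kernel=7, stride=1, padding=3)
    trace.append(("conv_in", base, size))
    size = conv_out(size, kernel=3, stride=2, padding=1)
    trace.append(("down_1", base * 2, size))
    size = conv_out(size, kernel=3, stride=2, padding=1)
    trace.append(("down_2", base * 4, size))
    # residual blocks keep both channels and spatial size unchanged
    trace.append((f"residuals x{n_res}", base * 4, size))
    # assumed: stride-2 transposed convs with 'same' padding exactly double the size
    size *= 2
    trace.append(("up_2", base * 2, size))
    size *= 2
    trace.append(("up_1", base, size))
    size = conv_out(size, kernel=7, stride=1, padding=3)
    trace.append(("conv_out + tanh", 3, size))
    return trace

for stage, channels, hw in trace_generator():
    print(f"{stage:<16} {channels:>3} x {hw} x {hw}")
```

The output ends at 3×256×256, the same shape as the input, which is what allows one generator's output to be fed straight into the other generator to close the cycle.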

```python
import mindspore.nn as nn
import mindspore.ops as ops
# weight_init, ConvNormReLU and ResidualBlock are defined earlier in the tutorial (not shown in this post).

class ResNetGenerator(nn.Cell):
    def __init__(self, input_channel=3, output_channel=64, n_layers=9, alpha=0.2, norm_mode='instance',
                 dropout=False, pad_mode='CONSTANT'):
        super(ResNetGenerator, self).__init__()
        # 7x7 stride-1 input conv: 3 -> 64 channels
        self.conv_in = ConvNormReLU(input_channel, output_channel, 7, 1, alpha, norm_mode, pad_mode=pad_mode)
        # Two stride-2 downsampling convs: 64 -> 128 -> 256 channels
        self.down_1 = ConvNormReLU(output_channel, output_channel * 2, 3, 2, alpha, norm_mode)
        self.down_2 = ConvNormReLU(output_channel * 2, output_channel * 4, 3, 2, alpha, norm_mode)
        # n_layers residual blocks at 256 channels. Build a new block per iteration:
        # `[ResidualBlock(...)] * n_layers` repeats ONE block object, so every layer would share weights.
        layers = [ResidualBlock(output_channel * 4, norm_mode, dropout=dropout, pad_mode=pad_mode)
                  for _ in range(n_layers)]
        self.residuals = nn.SequentialCell(layers)
        # Two stride-2 transposed convs upsample back: 256 -> 128 -> 64 channels
        self.up_2 = ConvNormReLU(output_channel * 4, output_channel * 2, 3, 2, alpha, norm_mode, transpose=True)
        self.up_1 = ConvNormReLU(output_channel * 2, output_channel, 3, 2, alpha, norm_mode, transpose=True)
        # 7x7 output conv back to 3 (RGB) channels
        if pad_mode == 'CONSTANT':
            self.conv_out = nn.Conv2d(output_channel, 3, kernel_size=7, stride=1, pad_mode='pad', padding=3,
                                      weight_init=weight_init)
        else:
            pad = nn.Pad(paddings=((0, 0), (0, 0), (3, 3), (3, 3)), mode=pad_mode)
            conv = nn.Conv2d(output_channel, 3, kernel_size=7, stride=1, pad_mode='pad', weight_init=weight_init)
            self.conv_out = nn.SequentialCell([pad, conv])

    def construct(self, x):
        x = self.conv_in(x)
        x = self.down_1(x)
        x = self.down_2(x)
        x = self.residuals(x)
        x = self.up_2(x)
        x = self.up_1(x)
        output = self.conv_out(x)
        # tanh squashes outputs into [-1, 1], so images are expected to be normalized to that range
        return ops.tanh(output)

# One generator per translation direction, with distinct parameter-name prefixes.
net_rg_a = ResNetGenerator()
net_rg_a.update_parameters_name('net_rg_a.')
net_rg_b = ResNetGenerator()
net_rg_b.update_parameters_name('net_rg_b.')
```
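The `ResidualBlock` class itself is not shown in this post. The NumPy sketch below uses a hypothetical `ToyResidualBlock` (a skip connection around a single linear map plus tanh, not the real convolutional block) to illustrate two points: the skip connection `x + f(x)` preserves the input shape, so nine blocks can be chained back to back; and in Python, `[block] * n` repeats one object n times, while a list comprehension creates n independent objects with their own weights.

```python
import numpy as np

class ToyResidualBlock:
    """Hypothetical stand-in for ResidualBlock: y = x + tanh(W @ x)."""

    def __init__(self, dim, rng):
        self.weight = rng.normal(0.0, 0.02, size=(dim, dim))

    def __call__(self, x):
        # Skip connection: the block only adds a residual, so the shape is preserved
        return x + np.tanh(self.weight @ x)

def run(blocks, x):
    """Apply the blocks in sequence, like nn.SequentialCell."""
    for block in blocks:
        x = block(x)
    return x

rng = np.random.default_rng(0)
shared = [ToyResidualBlock(8, rng)] * 9                      # one object, repeated 9 times
independent = [ToyResidualBlock(8, rng) for _ in range(9)]  # 9 separate objects

print(len({id(b) for b in shared}))        # 1: every "layer" uses the same weight matrix
print(len({id(b) for b in independent}))   # 9: each layer has its own weights
print(run(independent, np.ones(8)).shape)  # (8,)
```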
