05 基于MindSpore的GPT2实现文本摘要

本案例默认在GPU P100上运行,因中文文本,tokenizer使用的是bert tokenizer而非gpt tokenizer等原因,全量数据训练1个epoch的时间约为80分钟。为节约时间,我们选取了数据集中很小的一个子集(500条数据)来演示gpt2的微调和推理全流程,但由于数据量不足,会导致模型效果较差。由于训练数据量少,epochs数少且tokenizer并未使用gpt tokeni

环境配置

- 配置python3.9环境

#安装mindnlp 0.4.0套件

!pip install mindnlp==0.4.0

!pip uninstall soundfile -y

!pip install https://ms-release.obs.cn-north-4.myhuaweicloud.com/2.3.1/MindSpore/unified/aarch64/mindspore-2.3.1-cp39-cp39-linux_aarch64.whl --trusted-host ms-release.obs.cn-north-4.myhuaweicloud.com -i https://pypi.tuna.tsinghua.edu.cn/simple

数据集加载与处理

- 数据集加载

本次实验使用的是nlpcc2017摘要数据,内容为新闻正文及其摘要,总计50000个样本。

from mindnlp.utils import http_get

# download dataset

url = 'https://download.mindspore.cn/toolkits/mindnlp/dataset/text_generation/nlpcc2017/train_with_summ.txt'

path = http_get(url, './')

from mindspore.dataset import TextFileDataset

# load dataset

dataset = TextFileDataset(str(path), shuffle=False)

dataset.get_dataset_size()

本案例默认在GPU P100上运行,因中文文本,tokenizer使用的是bert tokenizer而非gpt tokenizer等原因,全量数据训练1个epoch的时间约为80分钟。

为节约时间,我们选取了数据集中很小的一个子集(500条数据)来演示gpt2的微调和推理全流程,但由于数据量不足,会导致模型效果较差。

# split into training and testing dataset

mini_dataset, _ = dataset.split([0.001, 0.999], randomize=False)

train_dataset, test_dataset = mini_dataset.split([0.9, 0.1], randomize=False)

- 数据预处理

原始数据格式:

article: [CLS] article_context [SEP]

summary: [CLS] summary_context [SEP]

预处理后的数据格式:

[CLS] article_context [SEP] summary_context [SEP]

import json

import numpy as np

# preprocess dataset

def process_dataset(dataset, tokenizer, batch_size=4, max_seq_len=1024, shuffle=False):

def read_map(text):

data = json.loads(text.tobytes())

return np.array(data['article']), np.array(data['summarization'])

def merge_and_pad(article, summary):

# tokenization

# pad to max_seq_length, only truncate the article

tokenized = tokenizer(text=article, text_pair=summary,

padding='max_length', truncation='only_first', max_length=max_seq_len)

return tokenized['input_ids'], tokenized['input_ids']

dataset = dataset.map(read_map, 'text', ['article', 'summary'])

# change column names to input_ids and labels for the following training

dataset = dataset.map(merge_and_pad, ['article', 'summary'], ['input_ids', 'labels'])

dataset = dataset.batch(batch_size)

if shuffle:

dataset = dataset.shuffle(batch_size)

return dataset

因GPT2无中文的tokenizer,我们使用BertTokenizer替代。

from mindnlp.transformers import BertTokenizer

# We use BertTokenizer for tokenizing chinese context.

tokenizer = BertTokenizer.from_pretrained('bert-base-chinese')

len(tokenizer)

train_dataset = process_dataset(train_dataset, tokenizer, batch_size=1)

next(train_dataset.create_tuple_iterator())

模型构建

- 构建GPT2ForSummarization模型,注意shift right的操作。

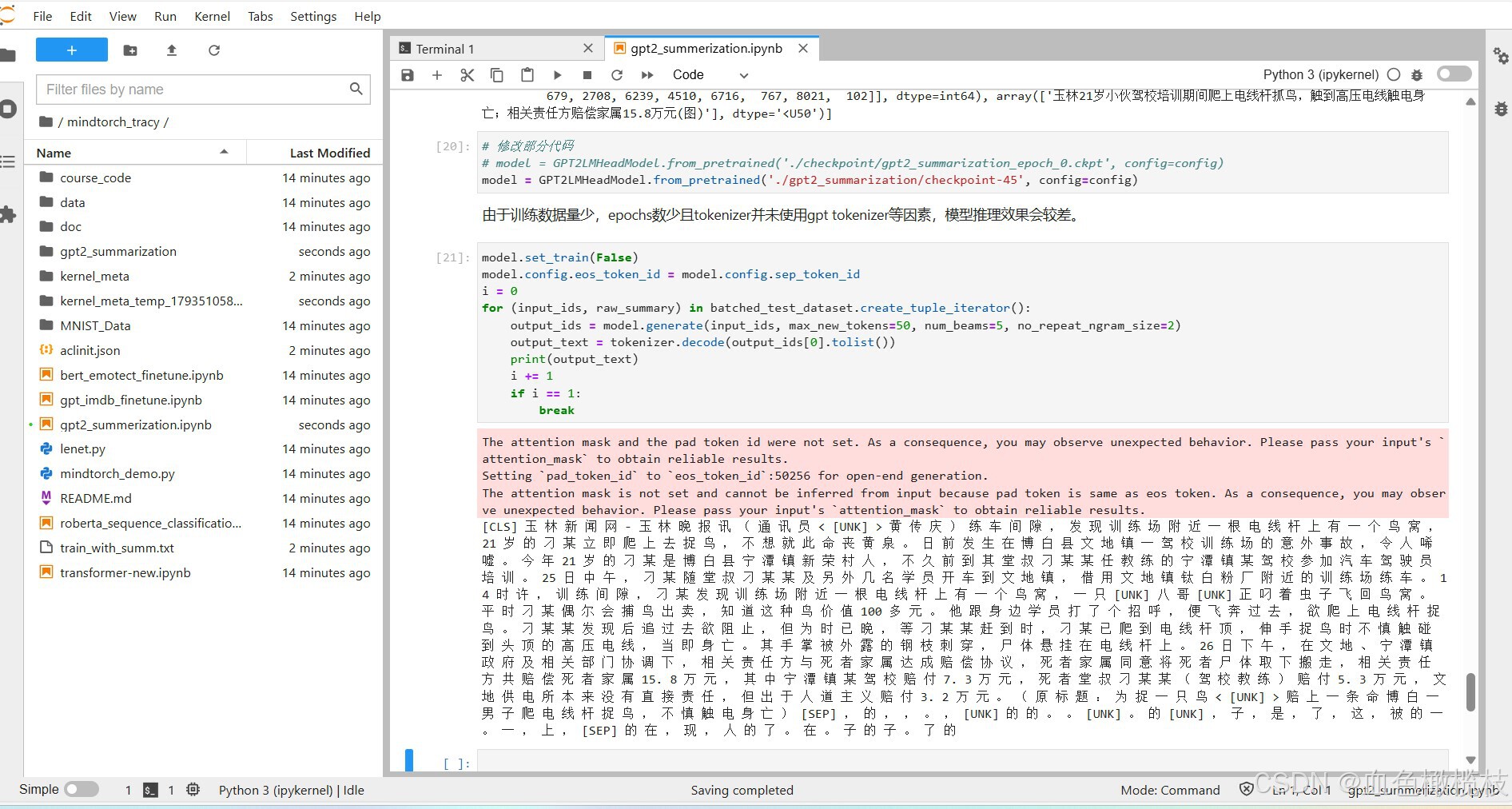

# 修改部分代码

# from mindspore import ops

# from mindnlp.transformers import GPT2LMHeadModel

# class GPT2ForSummarization(GPT2LMHeadModel):

# def construct(

# self,

# input_ids = None,

# attention_mask = None,

# labels = None,

# ):

# outputs = super().construct(input_ids=input_ids, attention_mask=attention_mask)

# shift_logits = outputs.logits[..., :-1, :]

# shift_labels = labels[..., 1:]

# # Flatten the tokens

# loss = ops.cross_entropy(shift_logits.view(-1, shift_logits.shape[-1]), shift_labels.view(-1), ignore_index=tokenizer.pad_token_id)

# return loss

from mindnlp.core.nn import functional as F

from mindnlp.transformers import GPT2LMHeadModel

class GPT2ForSummarization(GPT2LMHeadModel):

def forward(

self,

input_ids = None,

attention_mask = None,

labels = None,

):

outputs = super().forward(input_ids=input_ids, attention_mask=attention_mask)

shift_logits = outputs.logits[..., :-1, :]

shift_labels = labels[..., 1:]

# Flatten the tokens

loss = F.cross_entropy(shift_logits.view(-1, shift_logits.shape[-1]), shift_labels.view(-1), ignore_index=tokenizer.pad_token_id)

return (loss,)

- 动态学习率

# 去除了动态学习率

# from mindspore import ops

# from mindspore.nn.learning_rate_schedule import LearningRateSchedule

# class LinearWithWarmUp(LearningRateSchedule):

# """

# Warmup-decay learning rate.

# """

# def __init__(self, learning_rate, num_warmup_steps, num_training_steps):

# super().__init__()

# self.learning_rate = learning_rate

# self.num_warmup_steps = num_warmup_steps

# self.num_training_steps = num_training_steps

# def construct(self, global_step):

# if global_step < self.num_warmup_steps:

# return global_step / float(max(1, self.num_warmup_steps)) * self.learning_rate

# return ops.maximum(

# 0.0, (self.num_training_steps - global_step) / (max(1, self.num_training_steps - self.num_warmup_steps))

# ) * self.learning_rate

模型训练

num_epochs = 1

warmup_steps = 100

learning_rate = 1.5e-4

max_grad_norm = 1.0

num_training_steps = num_epochs * train_dataset.get_dataset_size()

from mindspore import nn

from mindnlp.transformers import GPT2Config, GPT2LMHeadModel

config = GPT2Config(vocab_size=len(tokenizer))

model = GPT2ForSummarization(config)

# 修改部分代码

# lr_scheduler = LinearWithWarmUp(learning_rate=learning_rate, num_warmup_steps=warmup_steps, num_training_steps=num_training_steps)

# optimizer = nn.AdamWeightDecay(model.trainable_params(), learning_rate=lr_scheduler)

# optimizer = nn.AdamWeightDecay(model.trainable_params(), learning_rate=learning_rate)

# 记录模型参数数量

print('number of model parameters: {}'.format(model.num_parameters()))

# 修改部分代码

# from mindnlp._legacy.engine import Trainer

# from mindnlp._legacy.engine.callbacks import CheckpointCallback

# ckpoint_cb = CheckpointCallback(save_path='checkpoint', ckpt_name='gpt2_summarization',

# epochs=1, keep_checkpoint_max=2)

# trainer = Trainer(network=model, train_dataset=train_dataset,

# epochs=num_epochs, optimizer=optimizer, callbacks=ckpoint_cb)

# trainer.set_amp(level='O1') # 开启混合精度

from mindnlp.engine import TrainingArguments

training_args = TrainingArguments(

output_dir="gpt2_summarization",

save_steps=train_dataset.get_dataset_size(),

save_total_limit=3,

logging_steps=1000,

max_steps=num_training_steps,

learning_rate=learning_rate,

max_grad_norm=max_grad_norm,

warmup_steps=warmup_steps

)

from mindnlp.engine import Trainer

trainer = Trainer(

model=model,

args=training_args,

train_dataset=train_dataset,

)

# 修改部分代码

# trainer.run(tgt_columns="labels")

trainer.train()

def process_test_dataset(dataset, tokenizer, batch_size=1, max_seq_len=1024, max_summary_len=100):

def read_map(text):

data = json.loads(text.tobytes())

return np.array(data['article']), np.array(data['summarization'])

def pad(article):

tokenized = tokenizer(text=article, truncation=True, max_length=max_seq_len-max_summary_len)

return tokenized['input_ids']

dataset = dataset.map(read_map, 'text', ['article', 'summary'])

dataset = dataset.map(pad, 'article', ['input_ids'])

dataset = dataset.batch(batch_size)

return dataset

batched_test_dataset = process_test_dataset(test_dataset, tokenizer, batch_size=1)

print(next(batched_test_dataset.create_tuple_iterator(output_numpy=True)))

# 修改部分代码

# model = GPT2LMHeadModel.from_pretrained('./checkpoint/gpt2_summarization_epoch_0.ckpt', config=config)

model = GPT2LMHeadModel.from_pretrained('./gpt2_summarization/checkpoint-45', config=config)

由于训练数据量少,epochs数少且tokenizer并未使用gpt tokenizer等因素,模型推理效果会较差。

model.set_train(False)

model.config.eos_token_id = model.config.sep_token_id

i = 0

for (input_ids, raw_summary) in batched_test_dataset.create_tuple_iterator():

output_ids = model.generate(input_ids, max_new_tokens=50, num_beams=5, no_repeat_ngram_size=2)

output_text = tokenizer.decode(output_ids[0].tolist())

print(output_text)

i += 1

if i == 1:

break

运行结果

鲲鹏昇腾开发者社区是面向全社会开放的“联接全球计算开发者,聚合华为+生态”的社区,内容涵盖鲲鹏、昇腾资源,帮助开发者快速获取所需的知识、经验、软件、工具、算力,支撑开发者易学、好用、成功,成为核心开发者。

更多推荐

已为社区贡献2条内容

已为社区贡献2条内容

所有评论(0)